Source: http://theconversation.com/

The Voice Assistant

Apple and Microsoft have, over the years, each established an AI persona in the market. Apple's Siri has drastically changed the way users search for information on the web. It reached the market so quickly and so widely that Google now has to compete with Wolfram Alpha's search (which Siri uses). Microsoft has Cortana, a beloved AI character from the Halo series, who now lives on Xbox, Windows Mobile, and Windows 10 machines.

Google has taken a different stance on AI. Google's mobile voice assistant is simply named "Assistant" and doesn't respond to personal questions or give sassy answers. Google Assistant simply searches and displays relevant information. This has been very beneficial for Google, because giving users too much power with their voice-activated search assistants has diminished the quality of the information the AI returns.

Still, the interaction users have with their voice-activated assistants is a unique one. Most of the time, users have to hold down a physical button to prompt the assistant, then watch the device's screen until the assistant screen pops up. This tells the user to release the button and wait for the assistant to make a noise indicating that it is ready to listen. The real interaction begins when the user starts to speak, usually with some form of question about basic information. The user can then only hope to be presented with what they were looking for.

Sometimes users get angry at their devices for not understanding them, or they speak with urgency when looking for the closest hospital or even a burger restaurant. The assistants do not pick up on tone, body movement, or facial expression at all, which is a roadblock for artificial intelligence. Another major roadblock for voice AI is common human speech patterns. When users speak a voice command, they sometimes have to remember what they wanted to say, so they stutter, draw out a sound ("theeeeee", for example), or, most commonly, say "uh". Voice assistants are not yet good at handling these patterns, so every prompt must be well spoken and flawless, which is very nonhuman.
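To make those speech patterns concrete, here is a rough sketch of how a transcript could be tidied up before it is handed to a search. It is only an illustration and not how Siri, Cortana, or Google's assistant actually work: the function name `clean_transcript` and the `FILLERS` list are made up for this example, and real assistants deal with audio rather than neat text.

```python
import re

# Hypothetical filler words, chosen for this example only.
FILLERS = {"uh", "um", "er", "erm"}

def clean_transcript(text: str) -> str:
    """Crudely normalize a spoken-style transcript.

    Drops filler words, collapses drawn-out sounds ("theeeeee" -> "the"),
    and removes immediately repeated words (a simple stand-in for stutters).
    """
    words = re.findall(r"[a-z']+", text.lower())
    cleaned = []
    for word in words:
        # Collapse any run of 3+ identical letters down to one letter.
        # This is crude: a drawn-out "seeee" would become "se", not "see".
        word = re.sub(r"(.)\1{2,}", r"\1", word)
        if word in FILLERS:
            continue
        # Skip a word that repeats the one right before it.
        if cleaned and cleaned[-1] == word:
            continue
        cleaned.append(word)
    return " ".join(cleaned)

print(clean_transcript("Uh, where is theeeeee, um, closest closest hospital?"))
```

Even this toy version shows why the problem is hard: the rules are guesses about what the speaker meant, and they say nothing about the urgency or frustration in the voice.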

Virtual Reality

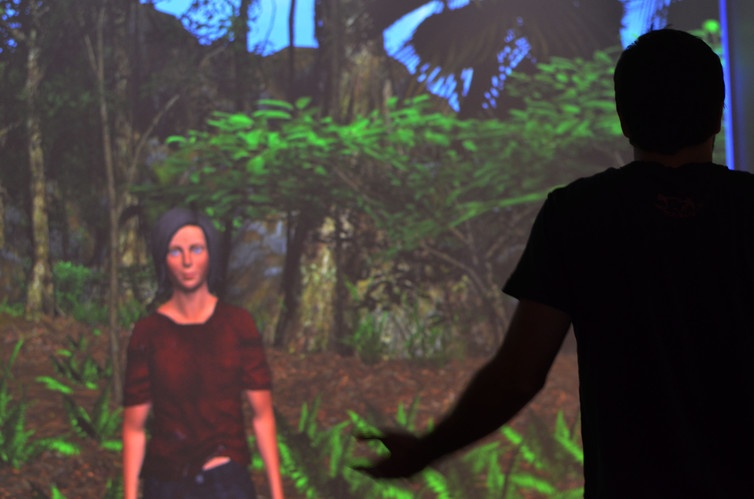

The creation and popularization of VR devices such as the HTC Vive and Oculus Rift have opened up a whole new medium of interaction between user and device. Users are no longer just observing what is in front of them; through the illusion and the suspension of disbelief, they now feel they are actually in that world. Unfortunately, though, the interaction is still a one-way experience. The user supplies all the motion and looks into the screen, while the hardware and software remain indifferent to the user.

Source: http://theconversation.com/

The 4th Wall

Artificial Intelligence, Virtual Reality, Voice Search, and Augmented Reality must break the 4th wall in order to exponentially advance to suit human needs. As I like to put it,

"If thou gaze long into a VR headset, the VR headset will also gaze into thee".

It may be weird to think about, but as AI development moves further along, virtual reality and voice will become an intimate experience. The user puts on a VR headset that sits on their face and looks directly into their eyes for the entire experience. This is a good opportunity to break the 4th wall and let the device "see" the player's emotions and respond. The player also stands between two or more laser tracking cameras that watch their movement. To break the 4th wall, these cameras could evaluate the player's body language and respond as well. I recommend this read to further my explanation.

This type of demand for a progressive, responsive AI in Virtual Reality is exactly why everyone should at least look into the field (hint hint). Though VR is seen as a gaming platform right now, the applications for VR and AR will expand into every field. As a Human-Computer Interaction student, I realize I got very into this topic (sorry for the long read), but the issues I laid out are problems we will all be looking for answers to quite soon. I plan to go into specific details on each subject in a later blog. Will you be the one who innovates in AR, VR, and voice?